Your iPhone comes with powerful assistive technology (AT) built in, which is useful for checking how well your mobile app supports accessibility. When a mobile app doesn’t work well with one AT, it often doesn’t work well with others.

What is Voice Control?

All iOS and iPadOS devices include Voice Control (VC) speech input functionality. Enabling VC allows a person to speak commands to interact with the screen instead of tapping it directly. VC users can say, “Tap $Name” to activate an interactive control even when no speech flags are shown. To see speech flag hints, VC users can say, “Show names” to display the first word of each control’s accessible name. They can instead say, “Show numbers” to display a numbered speech flag next to each interactive control. For greater control on tight screens, VC users can also say, “Show grid” to display a grid of squares numbered 1 to 36 and then say, “Tap $Number” to tap on a square.

Supporting VC means ensuring all buttons and controls in mobile apps have accessible names and that the accessible name contains the visible text label. Speech flags should not appear next to text that is not interactive. VC users must be able to perform all the same actions as screen tap users without VC enabled.
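
The "accessible name contains the visible text label" rule can be checked in code. Below is a minimal sketch in plain Swift, not part of any Apple API: the function names `normalizedWords` and `accessibleNameContainsLabel` are hypothetical, and the matching rule (case-insensitive, whole words, in order) is an assumption about what counts as "contains".

```swift
import Foundation

/// Hypothetical helper: lowercases the text and splits it into words so that
/// case, punctuation, and spacing differences do not affect the comparison.
func normalizedWords(_ text: String) -> [String] {
    return text
        .lowercased()
        .components(separatedBy: CharacterSet.alphanumerics.inverted)
        .filter { !$0.isEmpty }
}

/// Hypothetical check: returns true when the words of the visible label
/// appear, in order and next to each other, inside the accessible name.
func accessibleNameContainsLabel(accessibleName: String, visibleLabel: String) -> Bool {
    let nameWords = normalizedWords(accessibleName)
    let labelWords = normalizedWords(visibleLabel)

    // An empty label, or a label longer than the name, can never match.
    guard !labelWords.isEmpty, labelWords.count <= nameWords.count else {
        return false
    }

    // Slide a window the size of the label across the accessible name.
    for start in 0...(nameWords.count - labelWords.count) {
        let window = Array(nameWords[start..<(start + labelWords.count)])
        if window == labelWords {
            return true
        }
    }
    return false
}

// A button that shows "Log on" and is named "Log on to your account" passes.
print(accessibleNameContainsLabel(accessibleName: "Log on to your account", visibleLabel: "Log on"))

// A button that shows "Log on" but is named "Sign in" fails, so a VC user
// who says "Tap Log on" would likely not activate it.
print(accessibleNameContainsLabel(accessibleName: "Sign in", visibleLabel: "Log on"))
```

A check like this could run over pairs of visible text and accessible names collected from your own screens. It only compares strings, so it does not replace testing with VC on a device.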

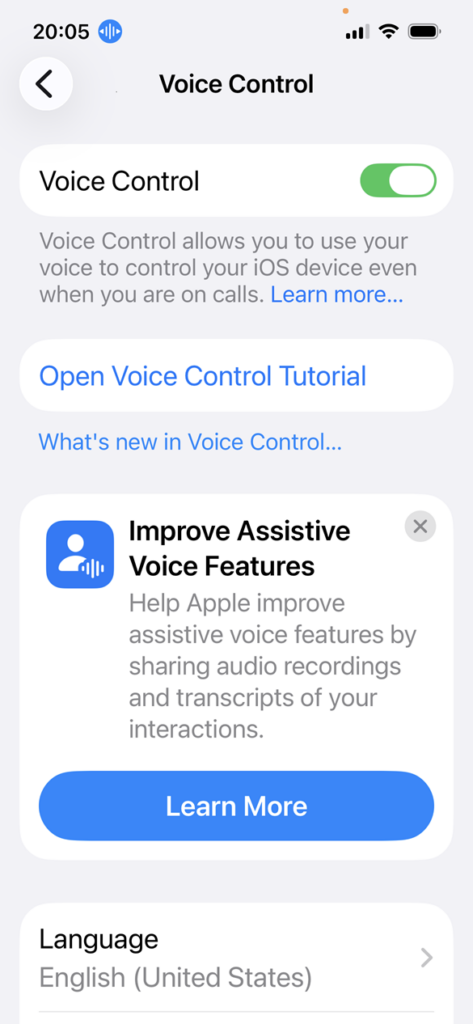

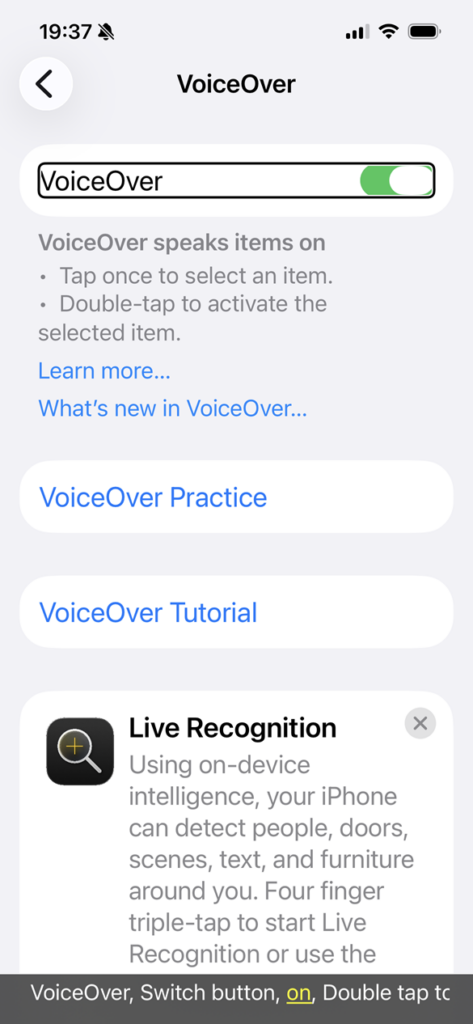

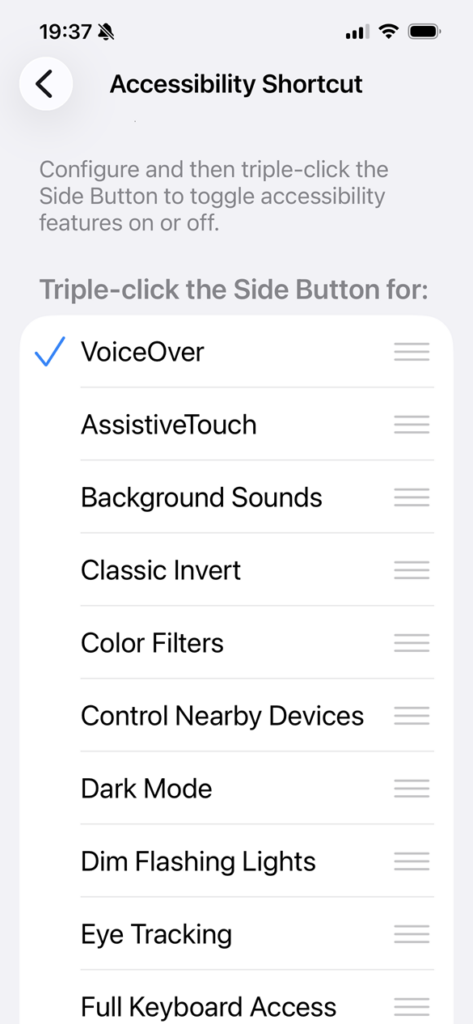

Enabling Voice Control on iOS

Be cautious when enabling any AT on a mobile device. Make sure you understand how to disable it again so you don’t get stuck, unable to navigate the device.

- Settings app > Accessibility > Voice Control > Toggle Voice Control switch on/off

- Settings app > Accessibility > Accessibility Shortcut > Select Voice Control from the list

- Ask Siri, “Enable/disable Voice Control”

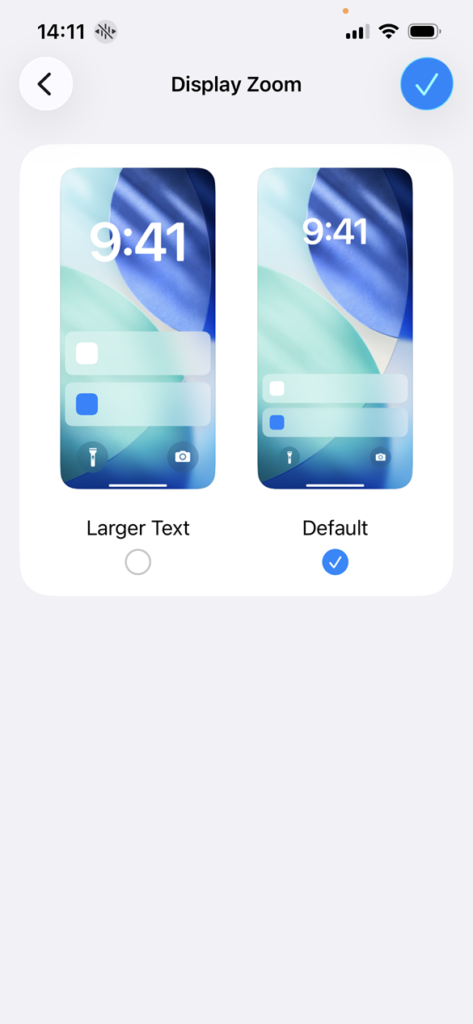

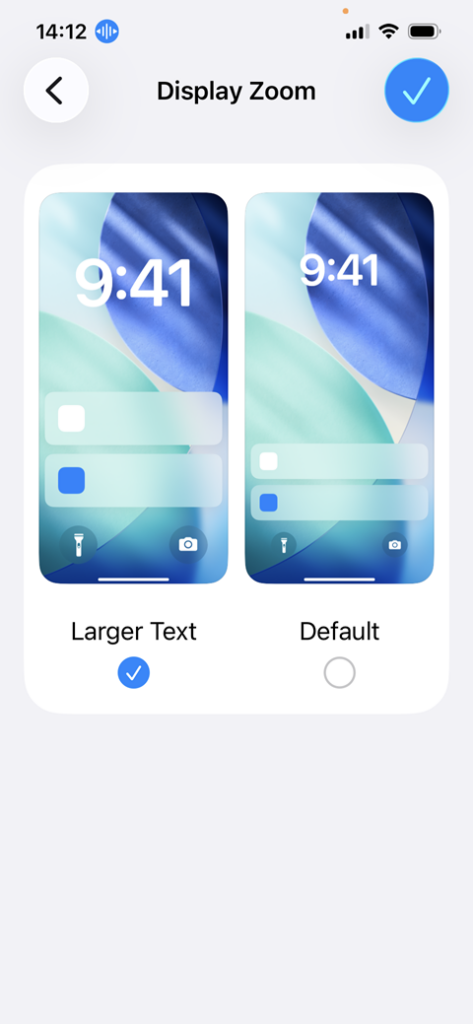

My preferred way to turn VC on and off is to ask Siri to “enable/disable Voice Control”. Once VC is enabled, a circle icon appears to the right of the clock in the upper left of the screen. When this icon is blue, VC is listening for commands. When this icon is gray, VC is sleeping, but the microphone is still on, as indicated by a tiny orange dot above the cellular signal strength indicator.

Using Voice Control

- Say: “Show names”

- Say: “Tap $Name”

- Say: “Show numbers”

- Say: “Tap $Number”

- Say: “Show grid”

- Say: “Stop listening” to keep Voice Control enabled but not active

- Say: “Start listening” to wake up Voice Control

Video: Voice Control navigation example

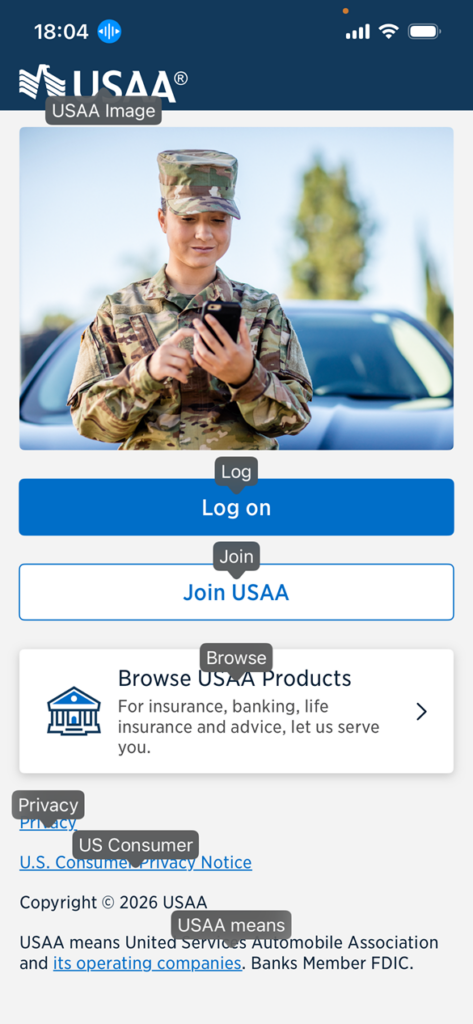

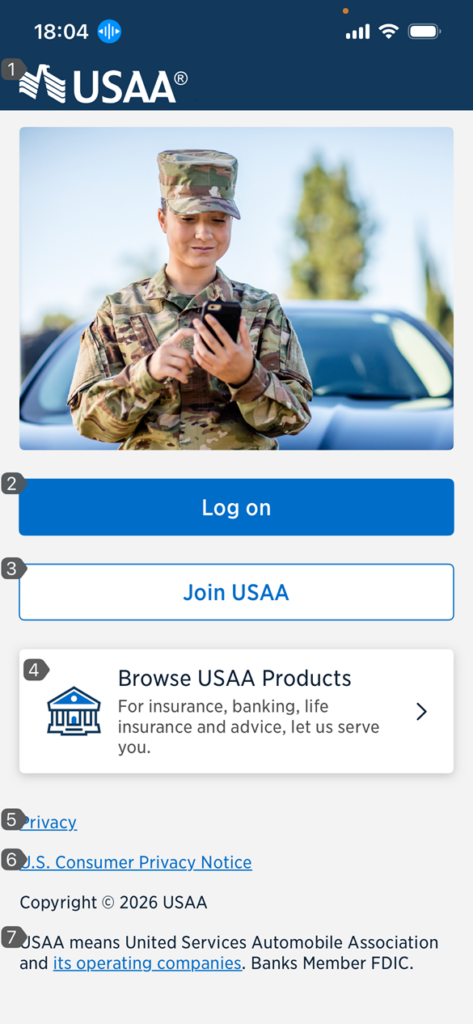

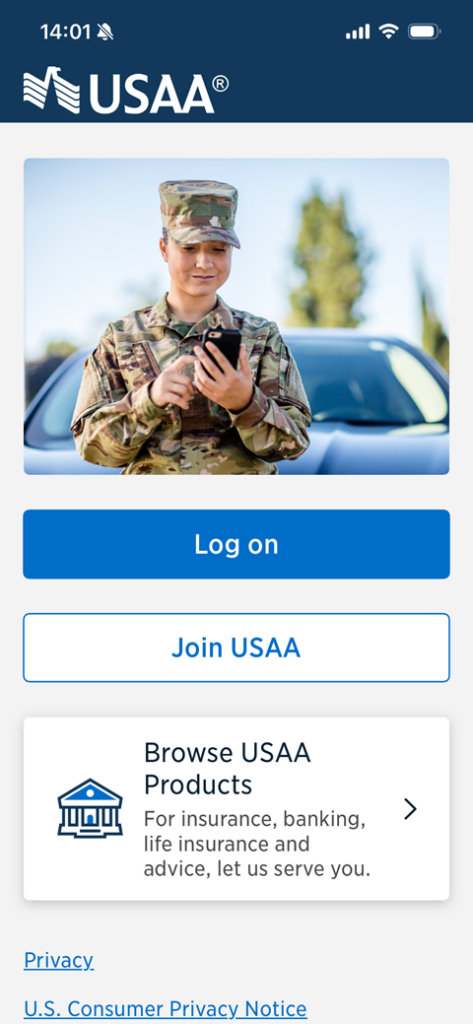

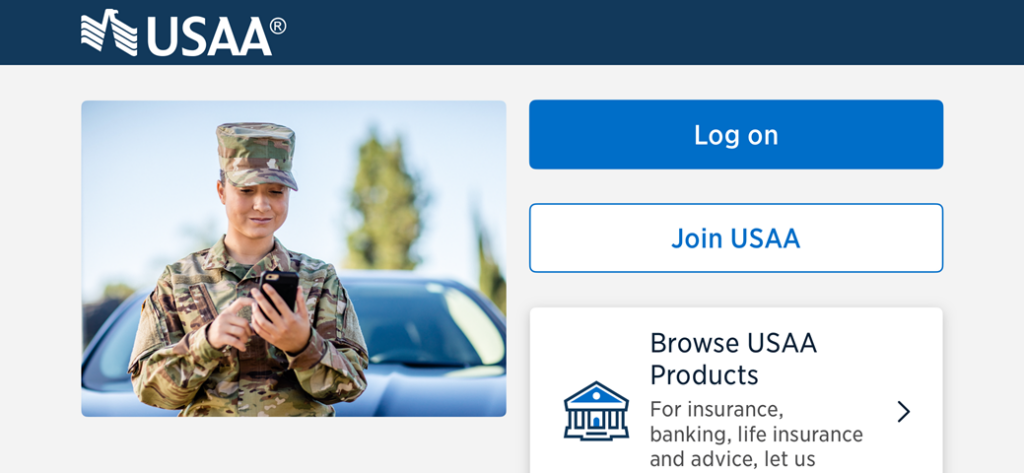

A Voice Control user opens the USAA iOS app to the landing screen and says, “Start listening”. The command appears on the screen, and numbered speech flags appear next to the interactive controls, such as the “Log on” button. Next, the user says, “Tap 4”. The number 4 speech flag is on a button labeled “Browse USAA Products”. The Products screen opens with numbered speech flags next to the interactive controls. The user says, “Tap 1” to activate the close button and return to the previous screen.

The user says, “Show names” and the first word of each interactive control’s accessible name appears as a speech flag. Next, the user says, “Tap log on” and the login form screen appears with word speech flags next to the interactive controls. The user says, “Tap Close” to activate the close button and return to the previous screen.

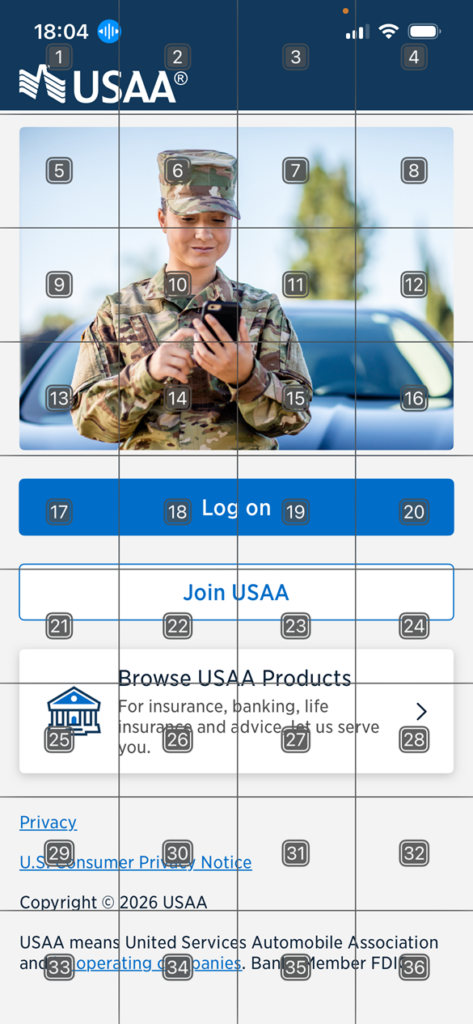

The user says, “Show grid” and a grid of squares numbered 1 to 36 appears on top of the screen content. Next, the user says, “Tap 22” to activate the grid square overlaying the “Join USAA” button. The Join USAA screen appears with a grid of numbers. The user says, “Tap close” to activate the close button and return to the previous screen.

Related articles

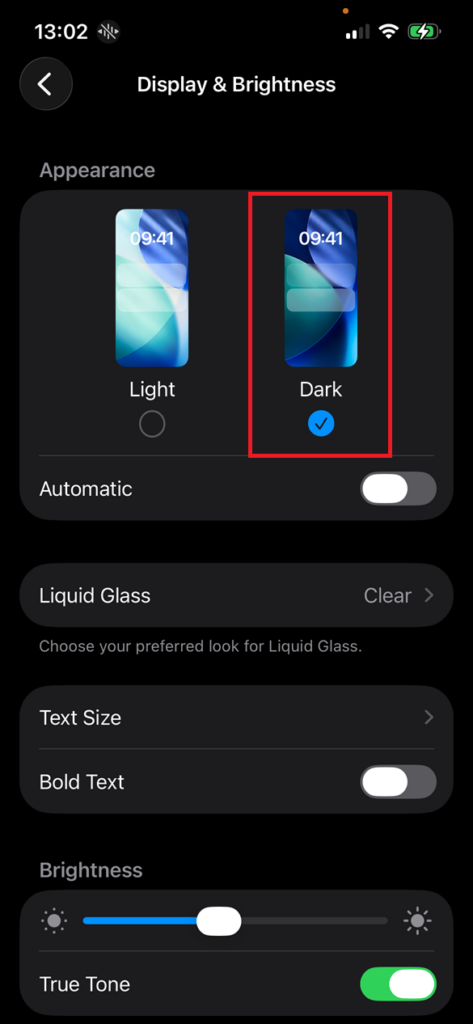

- 4 iOS display settings to check your app with

- AT for iPhone: VoiceOver screen reader

- AT for iPhone: Voice Control speech input

- AT for iPhone: Full Keyboard Access (25 May)

Screenshots and videos taken on an iPhone 16e running iOS 26.4.